...

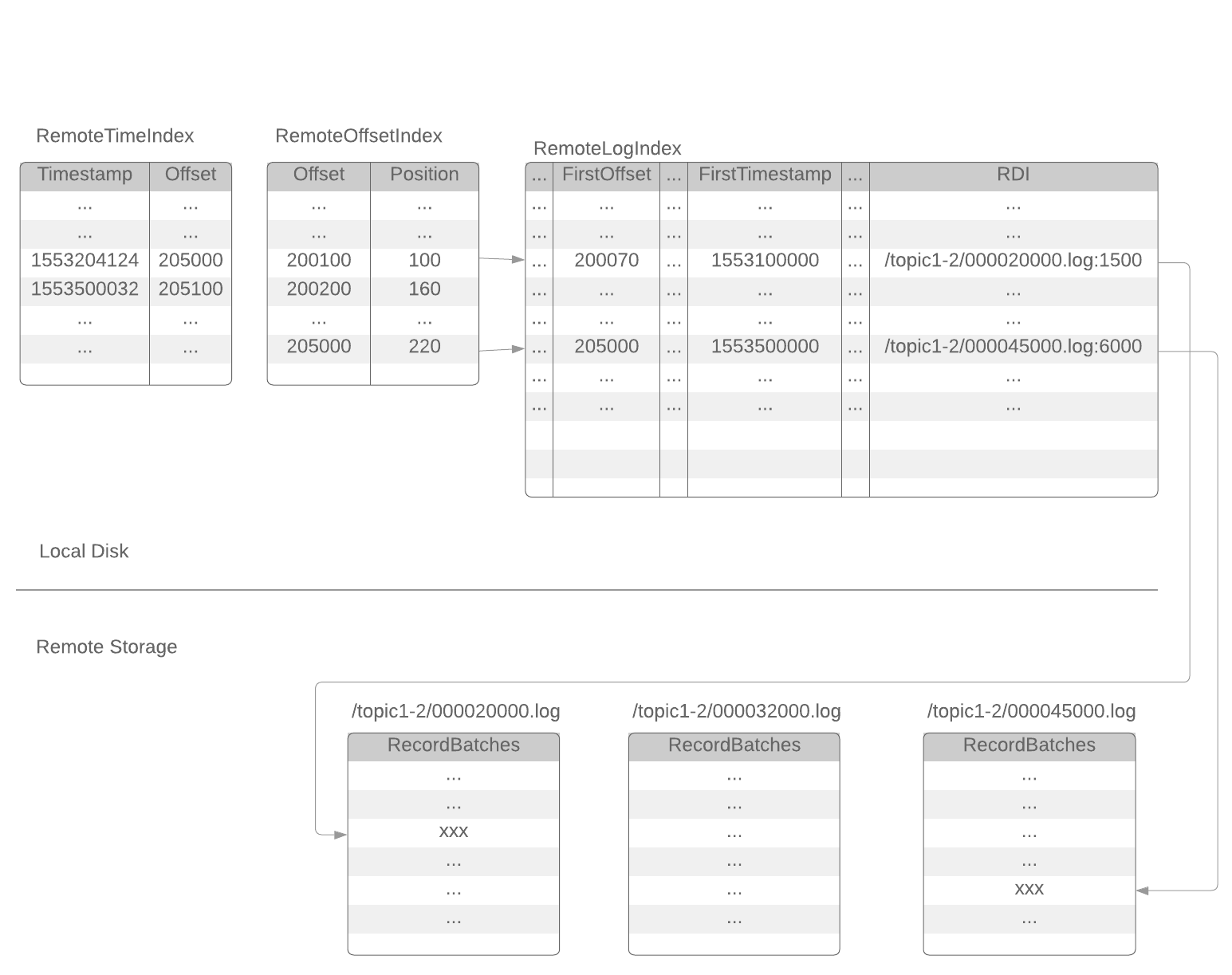

The remoteOffsetIndex and remoteTimestampIndex files are similar to the existing .index (offset index) and .timeindex (timestamp index) files. The only difference is that they point into the corresponding remoteLogIndex file instead of into a log segment file.
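The lookup this implies can be sketched as below. This is a minimal illustration, not the actual implementation: the entry layout, the names `OffsetIndexEntry`, `remote_index_pos` and `lookup`, and the in-memory list are all assumptions made for the example. The only points taken from the text are that the index is searched like the existing offset index and that the result is a position in the remoteLogIndex file rather than in a log segment.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class OffsetIndexEntry:
    base_offset: int       # first message offset covered by this entry
    remote_index_pos: int  # hypothetical: position of an entry in the remoteLogIndex file


def lookup(entries, target_offset):
    """Return the remoteLogIndex position of the entry with the largest
    base_offset <= target_offset, or None if the offset precedes all entries."""
    keys = [e.base_offset for e in entries]
    i = bisect_right(keys, target_offset) - 1
    return entries[i].remote_index_pos if i >= 0 else None


# Made-up entries, sorted by base_offset as an offset index would be.
remote_offset_index = [
    OffsetIndexEntry(base_offset=0, remote_index_pos=0),
    OffsetIndexEntry(base_offset=1000, remote_index_pos=64),
    OffsetIndexEntry(base_offset=2000, remote_index_pos=128),
]

position = lookup(remote_offset_index, 1500)
```

In this sketch a reader would then open the remoteLogIndex file at `position` to find out where the data lives in remote storage; that second step is omitted because its format is not described in this section.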

Performance Test Results

We tested the performance of the initial implementation of this proposal.

The cluster configuration:

- 5 brokers

- 20 CPU cores, 256GB RAM (each broker)

- 2TB * 22 hard disks in RAID0 (each broker)

- Hardware RAID card with NV-memory write cache

- 20Gbps network

- snappy compression

- 6300 topic-partitions with 3 replicas

- remote storage uses HDFS

Each test case was run under two types of workload (acks=all and acks=1).

| | Workload-1 (at-least-once, acks=all) | Workload-2 (acks=1) |
|---|---|---|
| Producers | 10 producers, 30MB/sec/broker | 10 producers, 55MB/sec/broker |
| In-sync Consumers | 10 consumers, 120MB/sec/broker | 10 consumers, 220MB/sec/broker |

Test case 1 (Normal case):

No remote storage reads, and no broker rebuild.

| Workload | Metric | with tiered storage | without tiered storage |
|---|---|---|---|
| Workload-1 (acks=all, low traffic) | Avg P99 produce latency | 25ms | 21ms |
| | Avg P95 produce latency | 14ms | 13ms |
| Workload-2 (acks=1, high traffic) | Avg P99 produce latency | 9ms | 9ms |
| | Avg P95 produce latency | 4ms | 4ms |

There is a small overhead when tiered storage is turned on. This is expected, as the brokers have to ship segments to remote storage and sync the remote segment metadata between brokers. With at-least-once (acks=all) produce, the P99 produce latency increases slightly (from 21ms to 25ms) when tiered storage is turned on. With acks=1 produce, the produce latency is almost unchanged.

Test case 2 (out-of-sync consumers catching up):

In addition to the normal traffic, 9 out-of-sync consumers consume old data at 180MB/s per broker (900MB/s in total across the 5 brokers).

With tiered storage, the old data is read from HDFS. Without tiered storage, the old data is read from local disk.

| Workload | Metric | with tiered storage | without tiered storage |
|---|---|---|---|
| Workload-1 (acks=all, low traffic) | Avg P99 produce latency | 42ms | 60ms |
| | Avg P95 produce latency | 18ms | 30ms |
| Workload-2 (acks=1, high traffic) | Avg P99 produce latency | 10ms | 10ms |
| | Avg P95 produce latency | 5ms | 4ms |

Consuming old data has a significant performance impact on acks=all producers. Without tiered storage, the P99 produce latency is almost tripled. With tiered storage, the performance impact is relatively lower, because reads from remote storage do not compete with produce requests for local hard disk bandwidth.

Consuming old data has little impact on acks=1 producers.
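The "almost tripled" figure can be checked with a short calculation. The sketch below assumes that the baseline for the comparison is the Workload-1 Avg P99 produce latency from test case 1; the latency values are copied from the two tables, while the variable and function names are chosen only for this example.

```python
# Avg P99 produce latency in ms for Workload-1 (acks=all), copied from the tables.
baseline_ms = {"with tiered storage": 25, "without tiered storage": 21}   # test case 1
catch_up_ms = {"with tiered storage": 42, "without tiered storage": 60}   # test case 2


def slowdown(before_ms, after_ms):
    """Ratio of the latency under catch-up reads to the baseline latency."""
    return after_ms / before_ms


ratios = {
    setup: round(slowdown(baseline_ms[setup], catch_up_ms[setup]), 2)
    for setup in baseline_ms
}
print(ratios)  # {'with tiered storage': 1.68, 'without tiered storage': 2.86}
```

Under that assumption, the P99 latency grows by about 2.9x without tiered storage and by about 1.7x with it.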

Test case 3 (rebuild broker):

Under the normal traffic, stop a broker, remove all the local data, and rebuild it without replication throttling. This case simulates replacing a broken broker server.

| Workload | Metric | with tiered storage | without tiered storage |
|---|---|---|---|
| Workload-1 (acks=all, 12TB data per broker) | Max avg P99 produce latency | 56ms | 490ms |
| | Max avg P95 produce latency | 23ms | 290ms |
| | Duration | 2min | 230min |
| Workload-2 (acks=1, 34TB data per broker) | Max avg P99 produce latency | 12ms | 10ms |
| | Max avg P95 produce latency | 6ms | 5ms |
| | Duration | 4min | 520min |

With tiered storage, the rebuilt broker only needs to fetch from the other brokers the latest data that has not yet been shipped to remote storage. Without tiered storage, the rebuilt broker has to fetch all the data that has not expired. With the same log retention time, tiered storage reduced the rebuild time by a factor of more than 100.

Without tiered storage, the rebuilt broker has to read a large amount of data from the local hard disks of the leaders. This competes with the normal traffic for page cache and local disk bandwidth, and dramatically increases the acks=all produce latency.
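The rebuild speedup can be worked out from the Duration rows of the table. The sketch below takes all four durations in minutes, which for the Workload-1 figure without tiered storage is an assumption consistent with the "more than 100 times" claim; the dictionary layout and names are illustrative only.

```python
# Rebuild durations in minutes, taken from the Duration rows of the test case 3 table.
# Assumption: all four values are in minutes.
durations_min = {
    "Workload-1 (acks=all)": {"with tiered storage": 2, "without tiered storage": 230},
    "Workload-2 (acks=1)": {"with tiered storage": 4, "without tiered storage": 520},
}

speedup = {
    workload: d["without tiered storage"] / d["with tiered storage"]
    for workload, d in durations_min.items()
}
print(speedup)  # {'Workload-1 (acks=all)': 115.0, 'Workload-2 (acks=1)': 130.0}
```

Both workloads come out above 100x, at 115x and 130x respectively.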

Alternatives considered

The following alternatives were considered:

...