...

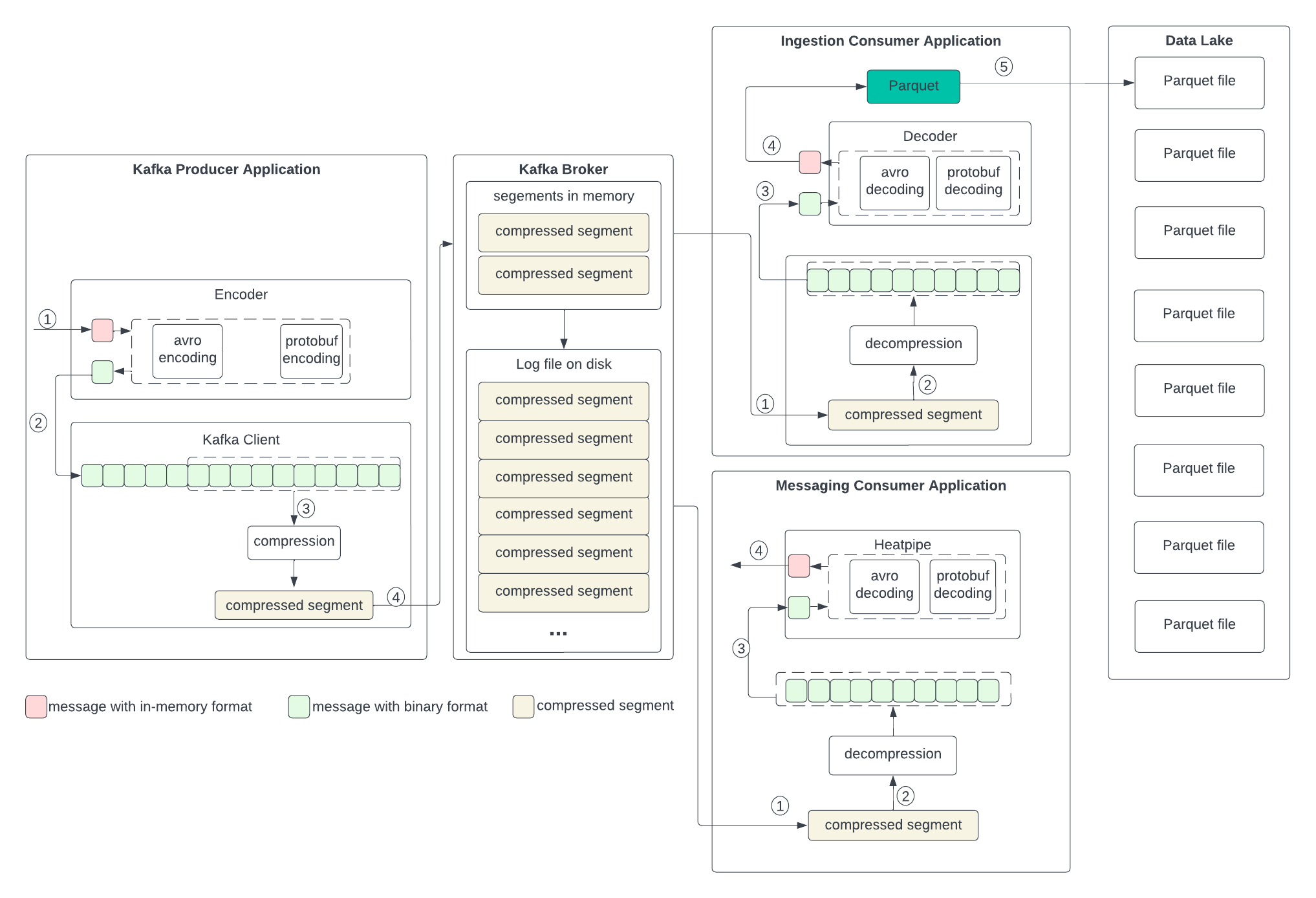

The following diagram describes the proposed data format changes in each state and the transformation process. In short, we propose replacing the separate compression step with Parquet, which combines encoding and compression at the segment level. This simplifies the ingestion consumer: it only has to dump the Parquet segment into the data lake.

Producer

The producer writes its in-memory data structures directly to the Kafka client, encoding and compressing them together in a single step.
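As a rough illustration of what "encode and compress together at the segment level" means, here is a minimal sketch using only the Python standard library. It does not produce real Parquet: a simple per-column dictionary encoding followed by `zlib` stands in for Parquet's columnar encoding and compression. The function names `encode_segment` and `decode_segment`, the record fields, and the JSON container are all assumptions made for this example and are not part of the proposal.

```python
import json
import zlib
from typing import Any


def encode_segment(records: list[dict[str, Any]]) -> bytes:
    """Turn row-oriented in-memory records into one columnar, compressed segment.

    Stand-in for a Parquet writer: encoding and compression happen in one call,
    so the caller only ever sees the final bytes.
    """
    if not records:
        raise ValueError("a segment must contain at least one record")

    encoded: dict[str, Any] = {}
    for name in records[0].keys():
        values = [record[name] for record in records]
        # Dictionary encoding: keep each distinct value once, then store indices.
        dictionary = list(dict.fromkeys(values))
        position = {value: i for i, value in enumerate(dictionary)}
        encoded[name] = {"dict": dictionary, "idx": [position[v] for v in values]}

    payload = json.dumps({"rows": len(records), "columns": encoded}).encode("utf-8")
    # Compress the whole segment at once rather than message by message.
    return zlib.compress(payload)


def decode_segment(segment: bytes) -> list[dict[str, Any]]:
    """Inverse of encode_segment, used here only to check the round trip."""
    body = json.loads(zlib.decompress(segment))
    columns = body["columns"]
    return [
        {name: col["dict"][col["idx"][i]] for name, col in columns.items()}
        for i in range(body["rows"])
    ]


# Hypothetical in-memory records held by the producer.
records = [
    {"host": "web-1", "status": 200},
    {"host": "web-1", "status": 200},
    {"host": "web-2", "status": 500},
]
segment = encode_segment(records)
# The resulting bytes would be handed to the Kafka client as a single message value.
```

Because the segment is already in its final encoded form when it leaves the producer, a downstream consumer in this sketch would not need to decode or re-encode it before storing it.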

...