...


We add a new quorum state


...

- `true` if the epoch and offsets in the Pre-Vote request satisfy the same requirements as a standard vote
- `false` otherwise
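This section does not spell out what "the same requirements as a standard vote" are. As a hedged illustration, the sketch below assumes the usual Raft rules: the request's epoch must not be older than the voter's current epoch, and the requester's log must be at least as up-to-date as the voter's (compare the last epoch first, then the end offset). `VoteRequirements`, `LogEnd`, and `satisfiesVoteRequirements` are hypothetical names, not Kafka APIs.

```java
/**
 * Hypothetical helper (not Kafka's implementation): Raft-style checks a voter
 * applies to a VoteRequest, which a Pre-Vote must also satisfy.
 */
final class VoteRequirements {

    /** Epoch of the last record and end offset of a replica's log (illustrative). */
    record LogEnd(int lastEpoch, long endOffset) {}

    private VoteRequirements() {}

    /** A higher last epoch wins; on equal last epochs, the longer log wins. */
    static boolean isAtLeastAsUpToDate(LogEnd requester, LogEnd voter) {
        if (requester.lastEpoch() != voter.lastEpoch()) {
            return requester.lastEpoch() > voter.lastEpoch();
        }
        return requester.endOffset() >= voter.endOffset();
    }

    /** Rejects stale epochs, then applies the log comparison above. */
    static boolean satisfiesVoteRequirements(int requestEpoch, int voterEpoch,
                                             LogEnd requester, LogEnd voter) {
        if (requestEpoch < voterEpoch) {
            return false; // assumed rule: never grant a request from an older epoch
        }
        return isAtLeastAsUpToDate(requester, voter);
    }

    public static void main(String[] args) {
        LogEnd voter = new LogEnd(5, 100L);
        System.out.println(satisfiesVoteRequirements(6, 5, new LogEnd(5, 100L), voter)); // true
        System.out.println(satisfiesVoteRequirements(6, 5, new LogEnd(5, 99L), voter));  // false
        System.out.println(satisfiesVoteRequirements(6, 5, new LogEnd(4, 500L), voter)); // false
    }
}
```

Comparing the last epoch before the end offset means a long log from an older epoch never outranks a shorter log that contains newer leadership history.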

...

Yes. If a leader is unable to receive fetch requests from a majority of servers, it can prevent the followers that can still communicate with it from voting in an eligible leader that can communicate with a majority of the cluster. This is why an additional "Check Quorum" safeguard is needed, which is what KAFKA-15489 implements. Check Quorum ensures a leader steps down if it is unable to receive fetch requests from a majority of servers.
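A minimal sketch of the Check Quorum idea, assuming the leader records the last time each voter fetched from it and resigns once fewer than a majority of voters (counting itself) have fetched within the fetch timeout. `CheckQuorumTracker` and its methods are hypothetical and do not reflect the KAFKA-15489 implementation; the grace period granted right after election is also an assumption.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Set;

/**
 * Hypothetical Check Quorum tracker (illustrative only): the leader resigns
 * when fewer than a majority of voters, itself included, fetched recently.
 */
final class CheckQuorumTracker {
    private final int voterCount;          // assumes the voter set includes the leader
    private final long fetchTimeoutMs;
    private final Map<Integer, Long> lastFetchMs = new HashMap<>();

    CheckQuorumTracker(int leaderId, Set<Integer> voters, long fetchTimeoutMs, long electedAtMs) {
        this.voterCount = voters.size();
        this.fetchTimeoutMs = fetchTimeoutMs;
        // Assumed grace period: every other voter gets a full timeout after the election.
        for (int id : voters) {
            if (id != leaderId) {
                lastFetchMs.put(id, electedAtMs);
            }
        }
    }

    /** Called when a fetch request from a voter reaches the leader. */
    void onFetch(int voterId, long nowMs) {
        if (lastFetchMs.containsKey(voterId)) {
            lastFetchMs.put(voterId, nowMs);
        }
    }

    /** True if the leader should step down at time nowMs. */
    boolean shouldResign(long nowMs) {
        int recent = 1; // the leader counts towards its own majority
        for (long t : lastFetchMs.values()) {
            if (nowMs - t < fetchTimeoutMs) {
                recent++;
            }
        }
        int majority = voterCount / 2 + 1;
        return recent < majority;
    }
}
```

For example, with voters {1, 2, 3}, leader 1, and a 2000 ms timeout, a leader that hears only from voter 2 at 1500 ms still has a majority at 2500 ms, but must resign by 4000 ms if no further fetches arrive.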

Are Pre-Vote and Check Quorum enough to prevent disruptive servers?

Not quite. We also want servers to reject Pre-Vote requests received within their fetch timeout. In other words, any server currently in Follower state should reject Pre-Votes: it has heard from a leader recently, so there is no need to consider electing a new leader.

The following two scenarios show why Pre-Vote and Check Quorum alone are not enough to prevent disruptive servers. (The second scenario is out of scope and will be covered by KIP-853: KRaft Controller Membership Changes, or by future work if it is not covered there.)

- Scenario A: We can imagine a scenario where two servers (S1 & S2) are both up-to-date on the log but unable to maintain a stable connection with each other. Let's say S1 is the leader. When S2 loses connectivity with S1 and is unable to find the leader, it will start a Pre-Vote. Since its log may be up-to-date, a majority of the quorum may grant the Pre-Vote, and S2 may then start and win a standard vote to become the new leader. This is already a disruption, since S1 was serving the majority of the quorum well as a leader. When S1 later loses connectivity with S2, it may start a Pre-Vote and win an election in the same way, and this bouncing of leadership could continue.

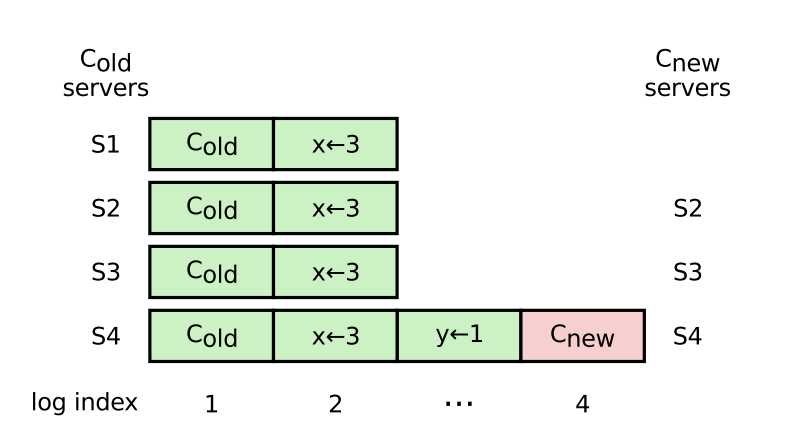

- Scenario B: A server in an old configuration (e.g. S1 in the diagram on pg. 41 of the Raft paper) starts a Pre-Vote when the leader is temporarily unavailable, and is elected because it is as up-to-date as the majority of the quorum. The Raft paper argues we cannot rely on the original leader replicating fast enough to get past this scenario, however unlikely it is. Suppose we remove S1 from the quorum: we can imagine some bug or limitation in quorum reconfiguration causing S1 to continuously try to reconnect with the quorum (i.e. start elections) while the leader is trying to remove it from the quorum.

Rejecting Pre-Vote requests received within fetch timeout

As mentioned in the prior section, a server should reject Pre-Vote requests received from other servers if its own fetch timeout has not expired yet. When servers receive VoteRequests with the PreVote field set to true, they will respond with VoteGranted set to:

- `true` if they are not a Follower and the epoch and offsets in the Pre-Vote request satisfy the same requirements as a standard vote
- `false` if they are a Follower, i.e. they have heard from a leader within fetch.timeout.ms (this could help cover Scenarios A & B above), or if the epoch and end offsets in the Pre-Vote request do not satisfy the requirements

(Not in scope) To address the disk loss and 'Servers in new configuration' scenario, one option would be to have servers respond `false` to vote requests from servers that have a new disk and haven't caught up on replication.
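The Pre-Vote rule can be sketched as a single predicate. This is a hypothetical illustration: the state names mirror the ones used in this KIP, but `PreVoteHandler`, `Replica`, and `voteGranted` are invented for the example, and the epoch and log checks assume standard Raft rules. The `main` method replays Scenario A with a hypothetical third voter, S3, that still follows S1.

```java
/**
 * Hypothetical Pre-Vote handler (names are illustrative): a Follower has heard
 * from a leader within its fetch timeout and always rejects; otherwise the
 * Pre-Vote must meet the same epoch and log requirements as a standard vote.
 */
final class PreVoteHandler {
    enum State { UNATTACHED, FOLLOWER, PROSPECTIVE, CANDIDATE, LEADER }

    /** Epoch (request epoch or current epoch) plus the replica's log end. */
    record Replica(int epoch, int lastEpoch, long endOffset) {}

    static boolean voteGranted(State localState, Replica requester, Replica local) {
        if (localState == State.FOLLOWER) {
            return false; // heard from a leader recently: no election needed
        }
        if (requester.epoch() < local.epoch()) {
            return false; // stale epoch (assumed standard-vote rule)
        }
        if (requester.lastEpoch() != local.lastEpoch()) {
            return requester.lastEpoch() > local.lastEpoch();
        }
        return requester.endOffset() >= local.endOffset();
    }

    public static void main(String[] args) {
        Replica s2 = new Replica(6, 5, 100L); // S2 lost S1 and sends a Pre-Vote
        Replica s3 = new Replica(5, 5, 100L); // S3's log is equally up-to-date
        // Scenario A: S3 still fetches from S1, so it rejects S2.
        System.out.println(voteGranted(State.FOLLOWER, s2, s3));   // false
        // If S3 had also lost the leader, the usual checks would decide.
        System.out.println(voteGranted(State.UNATTACHED, s2, s3)); // true
    }
}
```

Following the rule as written, only the Follower state is special-cased; how other states treat Pre-Votes beyond the standard checks is not specified in this section.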

...

This will be tested with unit tests, integration tests, system tests, and TLA+.

Rejected Alternatives

Not adding a new quorum state for Pre-Vote

Adding a new state should keep the logic for existing states closer to their original behavior and prevent overcomplicating them. This could aid in debugging as well since we know definitively that servers in Prospective state are sending Pre-Votes, while servers in Candidate state are sending standard votes.
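To illustrate the debugging argument, here is a hypothetical sketch in which the kind of VoteRequest a server sends is a fixed property of its state. Only the states named in this section are modeled; `QuorumStateKind`, `VoteKind`, and `expectedSender` are illustrative names, not Kafka classes.

```java
/** Hypothetical: the kinds of VoteRequest a server can send. */
enum VoteKind { NONE, PRE_VOTE, STANDARD_VOTE }

/**
 * Hypothetical sketch: with a separate Prospective state, the PreVote flag on
 * an outgoing VoteRequest follows directly from the sender's state.
 */
enum QuorumStateKind {
    UNATTACHED(VoteKind.NONE),
    FOLLOWER(VoteKind.NONE),
    PROSPECTIVE(VoteKind.PRE_VOTE),
    CANDIDATE(VoteKind.STANDARD_VOTE),
    LEADER(VoteKind.NONE);

    private final VoteKind sends;

    QuorumStateKind(VoteKind sends) {
        this.sends = sends;
    }

    /** Kind of VoteRequest a server in this state sends, if any. */
    VoteKind sends() {
        return sends;
    }

    /** The only state that can have sent a VoteRequest with the given PreVote flag. */
    static QuorumStateKind expectedSender(boolean preVote) {
        return preVote ? PROSPECTIVE : CANDIDATE;
    }
}
```

Because each flag value maps to exactly one state, a log line showing a VoteRequest with PreVote set to true immediately identifies the sender as Prospective.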

Rejecting VoteRequests received within fetch timeout (w/o Pre-Vote)

...