...

In September 2016, Facebook announced a new compression implementation named ZStandard, which is designed to scale with modern data processing environments. Given its strong performance in both speed and compression ratio, Hadoop and HBase will support ZStandard in the near future.

I propose that Kafka add support for ZStandard compression, along with new configuration options and a binary log format update.

Before going further, consider the benchmark results for ZStandard. I compared the compressed size and compression time of three 1 KB messages (3102 bytes in total) using the draft implementation of the ZStandard compression codec and all currently available compression codecs. The benchmark code is available in this branch. All elapsed times are the average of 100 iterations, preceded by 5 warm-up iterations. (To run the benchmark in your environment, move to jmh-benchmarks and run the following command: ./jmh.sh -wi 5 -i 100 -f 1)

| Codec | Level | Size (bytes) | Time (ms) | Description |
|---|---|---|---|---|
| Gzip | - | 396 | 0.083 ± 0.008 | |
| Snappy | - | 1,063 | 0.030 ± 0.001 | |
| LZ4 | - | 387 | 0.012 ± 0.001 | |
| ZStandard | 1 | 374 | 0.045 ± 0.003 | Speed-first setting. |
| ZStandard | 2 | 374 | 0.039 ± 0.001 | |
| ZStandard | 3 | 379 | 0.057 ± 0.003 | Facebook's recommended default setting. |
| ZStandard | 4 | 379 | 0.121 ± 0.013 | |
| ZStandard | 5 | 373 | 0.081 ± 0.004 | |
| ZStandard | 6 | 373 | 0.135 ± 0.016 | |
| ZStandard | 7 | 373 | 0.688 ± 0.060 | |
| ZStandard | 8 | 373 | 0.805 ± 0.072 | |
| ZStandard | 9 | 373 | 1.038 ± 0.060 | |
| ZStandard | 10 | 373 | 1.400 ± 0.099 | |
| ZStandard | 11 | 373 | 2.515 ± 0.188 | |
| ZStandard | 12 | 373 | 2.413 ± 0.195 | |
| ZStandard | 13 | 373 | 2.889 ± 0.219 | |
| ZStandard | 14 | 373 | 2.340 ± 0.030 | |
| ZStandard | 15 | 374 | 1.943 ± 0.118 | |
| ZStandard | 16 | 374 | 6.759 ± 0.625 | |
| ZStandard | 17 | 371 | 3.045 ± 0.198 | |
| ZStandard | 18 | 371 | 8.508 ± 0.787 | |
| ZStandard | 19 | 368 | 8.721 ± 0.499 | |
| ZStandard | 20 | 368 | 29.475 ± 2.456 | |
| ZStandard | 21 | 368 | 54.713 ± 5.023 | |
| ZStandard | 22 | 368 | 227.643 ± 18.390 | Size-first setting. |
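
The measurement procedure described before the table (a batch of three roughly 1 KB messages, 5 warm-up runs, then the mean of 100 timed runs) can be sketched with the JDK alone. This is not the JMH benchmark from the branch, and it exercises only GZIP because that codec ships with the JDK; measuring ZStandard the same way would require the Java binding. The class name, the helper names, and the sample payload are made up for illustration.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.zip.GZIPOutputStream;

final class CompressionBenchmarkSketch {

    /** Builds one repetitive, JSON-like message of exactly `size` bytes (made-up sample data). */
    static byte[] sampleMessage(int seed, int size) {
        StringBuilder sb = new StringBuilder();
        while (sb.length() < size) {
            sb.append("{\"id\":").append(seed).append(",\"status\":\"ok\"},");
        }
        sb.setLength(size); // ASCII only, so characters == bytes
        return sb.toString().getBytes(StandardCharsets.UTF_8);
    }

    /** Compresses `input` with the JDK's GZIP stream and returns the compressed bytes. */
    static byte[] gzip(byte[] input) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        try (GZIPOutputStream gz = new GZIPOutputStream(out)) {
            gz.write(input);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return out.toByteArray();
    }

    /** Mean wall-clock milliseconds per gzip call: `warmups` unmeasured runs, then `iterations` timed runs. */
    static double meanMillis(byte[] input, int warmups, int iterations) {
        for (int i = 0; i < warmups; i++) gzip(input);
        long start = System.nanoTime();
        for (int i = 0; i < iterations; i++) gzip(input);
        return (System.nanoTime() - start) / 1_000_000.0 / iterations;
    }

    public static void main(String[] args) {
        // Three messages of about 1 KB each, concatenated into one batch.
        ByteArrayOutputStream batch = new ByteArrayOutputStream();
        for (int seed = 1; seed <= 3; seed++) {
            byte[] m = sampleMessage(seed, 1024);
            batch.write(m, 0, m.length);
        }
        byte[] input = batch.toByteArray();
        System.out.printf("input=%d bytes, gzip=%d bytes, mean=%.3f ms%n",
                input.length, gzip(input).length, meanMillis(input, 5, 100));
    }
}
```

A hand-rolled timing loop like this is far noisier than JMH, so its numbers are only useful for rough, relative comparisons on one machine.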

Many popular big data processing frameworks already support ZStandard:

- Hadoop (3.0.0) - HADOOP-13578

- HBase (2.0.0) - HBASE-16710

- Spark (2.3.0) - SPARK-19112

ZStandard also works well with Apache Kafka. Benchmarks with the draft version (with ZStandard 1.3.3, Java Binding 1.3.3-4) showed a significant performance improvement. The following benchmark is based on Shopify's production environment (thanks to @bobrik).

(Above: Drop around 22:00 is zstd level 1, then at 23:30 zstd level 6.)

As you can see, ZStandard outperforms the other codecs with a compression ratio of 4.28x; Snappy reaches just 2.5x, and Gzip is not even close in terms of either ratio or speed.

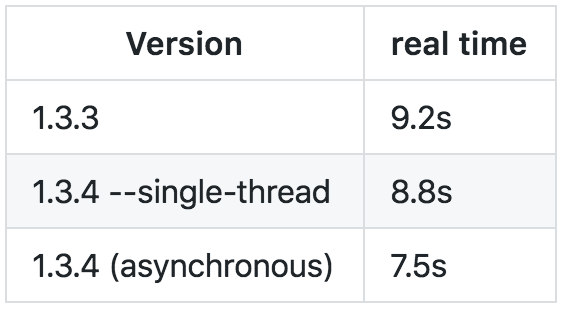

It is worth noting that this outcome is based on ZStandard 1.3. According to Facebook, ZStandard 1.3.4 improves throughput by 20-30%, depending on compression level and underlying I/O performance.

(Above: Comparison between ZStandard 1.3.3 and ZStandard 1.3.4.)

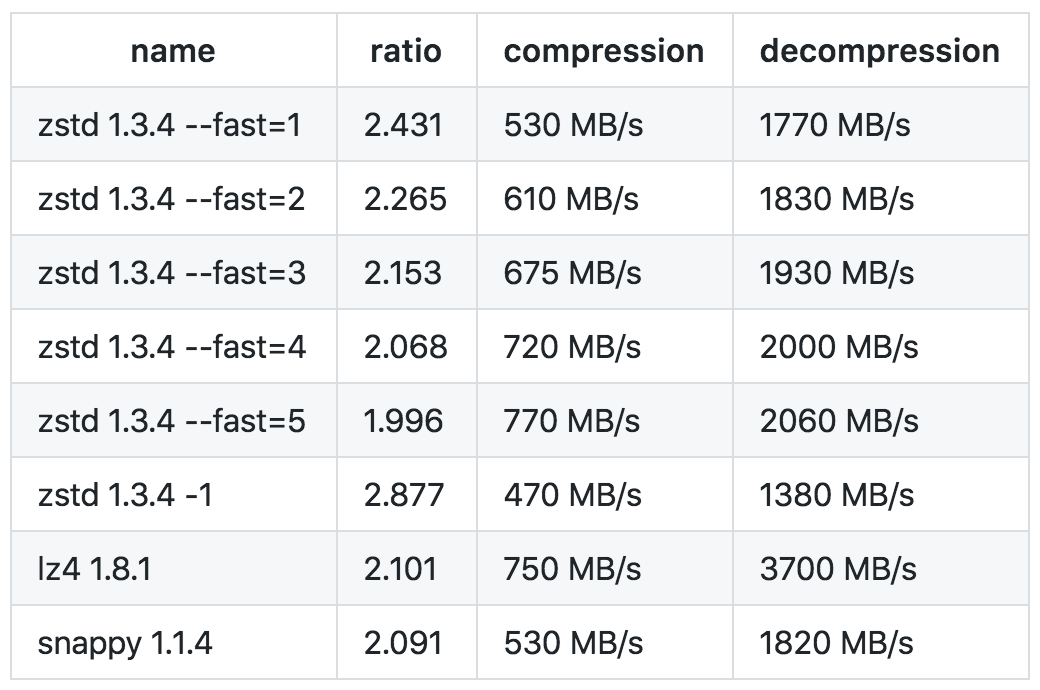

(Above: Comparison with the other compression codecs supported by Kafka.)

As of May 2018, the Java binding for ZStandard 1.3.4 is still in progress; the dependency will be updated before merging if this proposal is approved.

Accompanying Issues

However, supporting ZStandard is not just a matter of adding a new compression codec; it introduces several related issues, which we need to address first:

Backward Compatibility

Since the producer chooses the compression codec by default, there are potential problems:

A. An old consumer that does not support ZStandard receives ZStandard compressed data from the brokers.

B. Old brokers that don't support ZStandard receive ZStandard compressed data from the producer.

To address the problems above, we have the following options:

a. Bump the produce and fetch protocol versions in ApiKeys.

Advantages:

- Can guide the users to upgrade their client.

- Can support advanced features.

  - Broker Transcoding: Currently, the broker throws UNKNOWN_SERVER_ERROR for an unknown compression codec with this feature.

  - Per Topic Configuration: We can force the clients to use only predefined compression codecs by configuring the available codecs for each topic. This feature should be handled in a separate KIP, but this approach can serve as preparation for it.

Disadvantages:

- The older brokers can't make use of ZStandard.

- Short of a bump to the message format version.
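
One way to picture option a is a version gate on the broker: once the fetch protocol version is bumped, the broker can tell from the request version whether a consumer predates ZStandard support (problem A above) and answer with a clear error. The sketch below only illustrates that idea; the class, the enum, and the version number are hypothetical, and the proposal does not fix where such a check would live.

```java
final class VersionGateSketch {

    /** What the broker does with a batch for a given client request. */
    enum Decision { PASS_THROUGH, REJECT }

    /**
     * Rejects ZStandard data for clients whose request version predates the bump.
     * `firstZstdAwareVersion` is a placeholder; the proposal does not fix a number.
     */
    static Decision decide(boolean zstdCompressed, int requestVersion, int firstZstdAwareVersion) {
        if (zstdCompressed && requestVersion < firstZstdAwareVersion) {
            return Decision.REJECT;
        }
        return Decision.PASS_THROUGH;
    }

    public static void main(String[] args) {
        int bumped = 5; // hypothetical version number, for illustration only
        System.out.println(decide(true, 4, bumped));  // REJECT
        System.out.println(decide(true, 5, bumped));  // PASS_THROUGH
        System.out.println(decide(false, 4, bumped)); // PASS_THROUGH
    }
}
```

A gate like this cannot help with problem B, since old brokers cannot be changed after the fact; that matches the first disadvantage listed above.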

b. Leave unchanged - let the old clients fail.

Previously added codecs, Snappy (commit c51b940) and LZ4 (commit 547cced), follow this approach. With this approach, the problems listed above end with the following error message:

    java.lang.IllegalArgumentException: Unknown compression type id: 4
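
To make the origin of this message concrete, here is a simplified sketch of an id-to-codec lookup as an old client might perform it. It is not Kafka's actual source: the class and enum names are hypothetical, and the ids 0 to 3 for the existing codecs are an assumption consistent with the id 4 shown in the message above.

```java
final class OldClientSketch {

    /** Codecs known to a client built before ZStandard support; ids 0 to 3 only (assumed). */
    enum Codec {
        NONE(0), GZIP(1), SNAPPY(2), LZ4(3);

        final int id;

        Codec(int id) {
            this.id = id;
        }

        /** Resolves a codec id read from a message; unknown ids fail with the error quoted above. */
        static Codec forId(int id) {
            for (Codec c : values()) {
                if (c.id == id) return c;
            }
            throw new IllegalArgumentException("Unknown compression type id: " + id);
        }
    }

    public static void main(String[] args) {
        System.out.println(Codec.forId(3)); // LZ4
        try {
            Codec.forId(4); // the id carried by ZStandard-compressed data
        } catch (IllegalArgumentException e) {
            System.out.println(e.getMessage()); // Unknown compression type id: 4
        }
    }
}
```

The message names only the numeric id, which is why a user of an old client gets no hint that an upgrade would resolve it.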

Advantages:

- Easy to implement: nothing special is needed.

Disadvantages:

- The error message is terse. Users with old clients may be confused about how to resolve this error.

c. Improve the error messages

This approach is a compromise between a and b. We can include the supported API version for each compression codec in the error message by defining a mapping between CompressionCodec and ApiKeys:

    NoCompressionCodec     => ApiKeys.OFFSET_FETCH  // 0.7.0
    GZIPCompressionCodec   => ApiKeys.OFFSET_FETCH  // 0.7.0
    SnappyCompressionCodec => ApiKeys.OFFSET_FETCH  // 0.7.0
    LZ4CompressionCodec    => ApiKeys.OFFSET_FETCH  // 0.7.0
    ZStdCompressionCodec   => ApiKeys.DELETE_GROUPS // 2.0.0
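
A minimal sketch of how such a mapping could feed a more helpful error message follows. For brevity it attaches the release labels from the comments in the mapping above directly to each codec, rather than going through ApiKeys; all class and method names and the message wording are hypothetical, not part of the proposal.

```java
import java.util.EnumSet;
import java.util.Optional;
import java.util.Set;

final class CodecVersionHintSketch {

    /** Each codec carries the release label from the mapping's comments. */
    enum Codec {
        NONE("0.7.0"), GZIP("0.7.0"), SNAPPY("0.7.0"), LZ4("0.7.0"), ZSTD("2.0.0");

        final String sinceVersion;

        Codec(String sinceVersion) {
            this.sinceVersion = sinceVersion;
        }
    }

    /** Empty when the client supports the codec; otherwise a message that names the required release. */
    static Optional<String> upgradeHint(Codec codec, Set<Codec> clientSupports) {
        if (clientSupports.contains(codec)) {
            return Optional.empty();
        }
        return Optional.of("Compression codec " + codec + " requires version "
                + codec.sinceVersion + " or later; please upgrade the client.");
    }

    public static void main(String[] args) {
        Set<Codec> oldClient = EnumSet.of(Codec.NONE, Codec.GZIP, Codec.SNAPPY, Codec.LZ4);
        System.out.println(upgradeHint(Codec.ZSTD, oldClient).orElse("supported"));
        System.out.println(upgradeHint(Codec.LZ4, oldClient).orElse("supported"));
    }
}
```

Compared with the bare "Unknown compression type id" message, a hint of this kind tells the user which release to move to.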

Advantages:

- Requires little work.

- Can guide the users to upgrade their client.

- Leaves some room for advanced features in the future, like Per Topic Configuration.

Disadvantages:

- The error message may still be short.

Support Dictionary

Another issue worth considering is the dictionary feature. ZStandard offers a training mode that yields a dictionary for compression and decompression. It dramatically improves the compression ratio of small, repetitive input (e.g., semi-structured JSON), which fits Kafka's use case well. (For a real-world benchmark, see here.) Although the details of how to adapt this feature to Kafka (example) should be discussed in a separate KIP, we need to leave room for it.

As the benchmark table above shows, ZStandard delivers outstanding performance in both compression ratio and speed, especially with the speed-first setting (level 1), to the extent that only LZ4 is comparable.

Public Interfaces

This feature requires modifications to both the configuration options and the binary log format.

...

- Add a new dependency on the Java bindings of ZStandard compression.

- Add a new value to the CompressionType enum type and define ZStdCompressionCodec in the kafka.message package.

- Add an appropriate routine for the backward compatibility problem discussed above.

You can check the proof-of-concept implementation of this feature in this Pull Request.

Compatibility, Deprecation, and Migration Plan

How to resolve the backward compatibility problem is entirely up to the community's decision.

Rejected Alternatives

None yet.

...