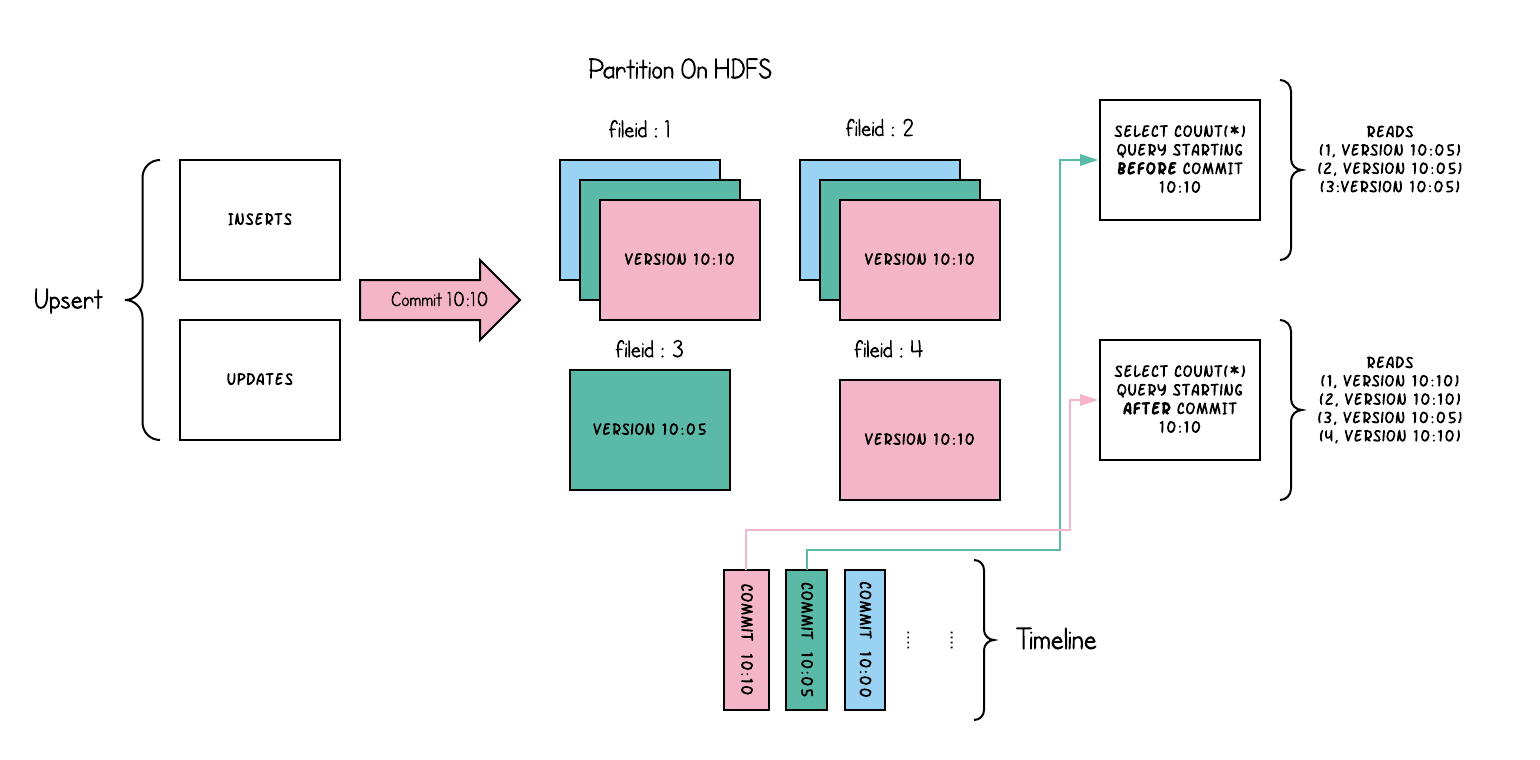

The Spark DAG for this storage is relatively simple. The key goal is to group the tagged Hudi record RDD into a series of updates and inserts by using a partitioner. To maintain file sizes, we first sample the input to obtain a `workload profile` that captures the spread of inserts vs. updates, their distribution among the partitions, etc. With this information, we bin-pack the records such that each update rewrites the latest version of its file id once, with new values for all records that have changed, while inserts are first packed onto the smallest file in each partition path until it reaches the configured maximum size. Any remaining records are then packed into new file id groups, again meeting the size requirements. In this storage, updating the index is a no-op, since the bloom filters are already written as part of committing data. In the case of Copy-On-Write, a single parquet file constitutes one `file slice`, which contains one complete version of the file.
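The bin-packing of inserts described above can be sketched roughly as follows. This is a minimal illustration and not Hudi's actual partitioner: the function `pack_inserts`, its parameters, and the `new-N` file ids are hypothetical, and it assumes a single partition path, a known average record size, and that existing files are filled smallest-first up to the maximum file size.

```python
def pack_inserts(existing_files, num_inserts, avg_record_bytes, max_file_bytes):
    """Assign insert counts to files for one partition path.

    existing_files: dict of file id -> current size in bytes (hypothetical input,
    standing in for what a workload profile would provide).
    Returns a list of (file_id, record_count) assignments.
    """
    remaining = num_inserts
    plan = []

    # Fill existing files first, smallest file first, up to the size limit.
    for file_id, size in sorted(existing_files.items(), key=lambda kv: kv[1]):
        if remaining == 0:
            break
        capacity = (max_file_bytes - size) // avg_record_bytes
        if capacity <= 0:
            continue
        take = min(capacity, remaining)
        plan.append((file_id, take))
        remaining -= take

    # Whatever is left goes into new file groups, each kept within the limit.
    per_new_file = max(1, max_file_bytes // avg_record_bytes)
    new_index = 0
    while remaining > 0:
        take = min(per_new_file, remaining)
        plan.append((f"new-{new_index}", take))
        remaining -= take
        new_index += 1

    return plan


if __name__ == "__main__":
    # Two existing files of 90 and 40 bytes, a 100-byte limit, 10-byte records.
    print(pack_inserts({"f1": 90, "f2": 40}, 12, 10, 100))
```

Updates are not shown here because they are routed to the file that already holds the record; only inserts need a packing decision.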

A def~table-type where a def~table's def~commits are fully merged into the def~table during a def~write-operation. This can be seen as "imperative ingestion", where "compaction" happens right away. No def~log-files are written, and def~file-slices contain only the def~base-file.
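As a toy illustration of this definition, the sketch below models a base file as a plain dictionary of record key to value, and a file group as a list of file slices ordered by commit time. The names `cow_upsert` and `file_slices` are hypothetical and no parquet or Hudi API is involved. It only shows the idea that each write merges the incoming records into a full new copy of the base file, with no log file in between.

```python
def cow_upsert(file_slices, commit_time, records):
    """Merge `records` into the latest base file and append a new file slice.

    file_slices: list of (commit_time, base_file) tuples, oldest first,
    where base_file is a dict of record key -> value (a stand-in for parquet).
    """
    latest_base = file_slices[-1][1] if file_slices else {}

    # Copy-on-write: the previous base file is left untouched.
    new_base = dict(latest_base)
    new_base.update(records)

    # The new slice holds only a base file; nothing is deferred to a log.
    return file_slices + [(commit_time, new_base)]


if __name__ == "__main__":
    slices = [("001", {"a": 1, "b": 2})]
    slices = cow_upsert(slices, "002", {"b": 20, "c": 3})
    for commit_time, base_file in slices:
        print(commit_time, base_file)
```

Each file slice is a complete version of the file, so a reader at a given commit only ever needs one base file per file group.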
