...

Add waitTimeMs in FetchResponse, and bump the FetchResponse and FetchRequest protocol version.

| Code Block |

|---|

FetchResponse => TransactionalId RequiredAcks Timeout [FetchableTopicResponse]

ThrottleTimeMs => INT32

WaitTimeMs => INT32

Timeout => INT32

ErrorCode => INT16

SessionId => INT32

FetchableTopicResponse => [Name [FetchablePartitionResponse] PreferredReadReplica Records]

...... |

Proposed Changes

We like to propose to track the real end to end fetch latency with those changes:

- Add waitTimeMs in FetchResponse()

- In Kafka API handler (in handleFetchRequest() function), when creating FetchResponse(), set the waitTimeMs as the time spent in purgatory

- In Follower broker, in processFetchRequest(), it will track the latency of fetch request and minus the waitTimeMs from FetchResponse.

- In FetcherStats, we will add a new histogram to track this calculated "true" fetch latency.

- We can also extend that to consumer fetch latency metrics.

The following figure illustrates the real end-to-end fetch latency. The grey box between the red circles is the waitTime subtracted from the fetch latency.

Gliffy Diagram macroId d7ab03b4-5318-4fc9-9227-b17eed54f89b displayName Real End-to-End Fetch Latency name Real End-to-End Fetch Latency pagePin 2

Additionally, at the leader side, we will Also, additionally, at leader side, we will also add a new metric called TotalLocalTime, which is TotalLocalTime = TotalTime - RemoteTime. This metric measures the time spent to process the fetch request on leader side, excluding the time spent in the purgatory.

Example

To better illustrate how the proposed fetch latency metric can be used for monitoring the latency between each pair of brokers, we simulated network latency by introducing artificial delays to the incoming packets on one broker of a 3-node cluster.

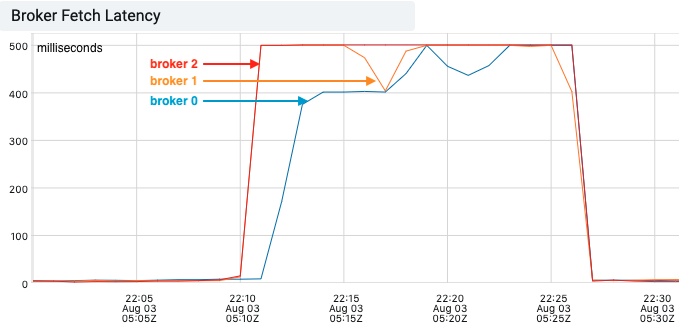

As the following graph shows, the broker fetch latency increased on all three brokers, pegging at 500ms(replica.fetch.wait.max.ms). This gave us very little information on where the slowness was introduced.

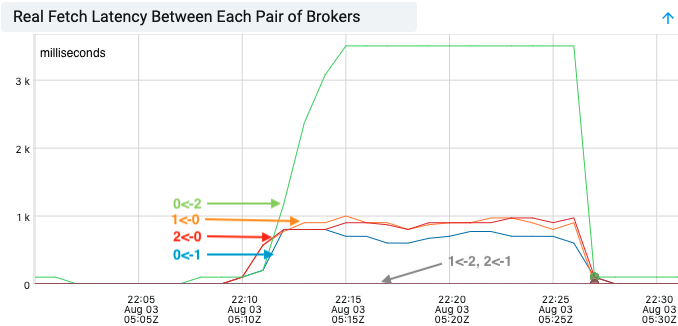

With the real end-to-end fetch latency, we can easily identify some slowness between broker 0 and broker 1, 2, but not between broker 1 and 2. This indicated broker 0 was the culprit.

Compatibility, Deprecation, and Migration Plan

Follow the standard protocol change update.

Rejected Alternatives

- End-to-end fetch latency including wait time

- Alternatively, we can track the end-to-end fetch latency at follower side, including the wait time spent in leader’s purgatory. This will avoid the protocol change in the FetchResponse. It is useful if the fetch latency is much greater than the replica.fetch.wait.max.ms. However, when the end-to-end fetch latency is close to replica.fetch.wait.max.ms, the time a fetch request stays in purgatory will skew the end-to-end fetch latency. It will be difficult to tell whether the high fetch latency is due to waiting or other reasons (slow networking, slow fetch processing).

- External Monitoring

- We do not add those metrics and instead use an external tool/process to monitor the network RTT between each pair of brokers. There are many tools for diagnosing network latency. However, most tools measure RTT by sending synthetic data packets between servers, which may not reflect the actual end-to-end fetch request latency.