
Status

Current state: Accepted

Discussion thread: https://lists.apache.org/thread/8dqvfhzcyy87zyy12837pxx9lgsdhvft

Vote thread: https://lists.apache.org/thread/4pqjp8r7n94lnymv3xc689mfw33lz3mj

JIRA: KAFKA-14127

...

The format sub-command in the kafka-storage.sh tool already supports formatting more than one log directory, by expecting a list of configured log.dirs and "formatting" only the ones that need it. A new property, directory.id, will be included in meta.properties to identify each log directory with a UUID. The UUID is randomly generated for each log directory.

meta.properties

A new property, directory.id, will be expected in the meta.properties file in each log directory configured under log.dirs. The property indicates the UUID for the log directory where the file is located. If any of the meta.properties files does not contain directory.id, one will be randomly generated and the file will be updated upon Broker startup. The kafka-storage.sh tool will be extended to generate this property as described in the previous section.

If the log directory that holds the cluster metadata topic is configured separately at a different path, using metadata.log.dir, then this log directory does not get a UUID assigned.

| Footnote |

|---|

| The broker cannot run if this particular log directory is unavailable, and when configured separately it cannot host any user partitions, so there's no point in identifying it in the Controller. |


Reserved UUIDs

The following UUIDs are excluded from the random pool when generating a log directory UUID:

- Uuid.UNASSIGNED_DIR, new Uuid(0L, 0L): used to identify new or unknown assignments.

- Uuid.LOST_DIR, new Uuid(0L, 1L): used to represent unspecified offline directories.

- Uuid.MIGRATING_DIR, new Uuid(0L, 2L): used when transitioning from a previous state where directory assignment was not available, to designate that some directory was previously selected to host a partition, but we're not sure which one yet.

The first 100 UUIDs, minus the three listed above, are also reserved for future use.
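As a rough illustration of the reservation rule, the sketch below draws random 128-bit identifiers and rejects any that fall in the reserved range. It assumes a UUID can be modelled as a pair of unsigned 64-bit halves, mirroring new Uuid(0L, 0L) above, and that "the first 100 UUIDs" means a zero upper half with a lower half from 0 to 99. The names DirectoryId, is_reserved and random_directory_id are hypothetical and not part of Kafka.

```python
import secrets
from typing import Callable, NamedTuple


class DirectoryId(NamedTuple):
    """Hypothetical stand-in for a 128-bit UUID split into two 64-bit halves."""
    most_significant: int
    least_significant: int


UNASSIGNED_DIR = DirectoryId(0, 0)
LOST_DIR = DirectoryId(0, 1)
MIGRATING_DIR = DirectoryId(0, 2)
RESERVED_COUNT = 100  # assumption: "the first 100 UUIDs" are (0, 0) .. (0, 99)


def is_reserved(dir_id: DirectoryId) -> bool:
    """True for the first 100 UUIDs, which include the three named ones."""
    return dir_id.most_significant == 0 and 0 <= dir_id.least_significant < RESERVED_COUNT


def _random_id() -> DirectoryId:
    # Two independent, cryptographically strong 64-bit halves.
    return DirectoryId(secrets.randbits(64), secrets.randbits(64))


def random_directory_id(draw: Callable[[], DirectoryId] = _random_id) -> DirectoryId:
    """Draw candidates until one falls outside the reserved range."""
    while True:
        candidate = draw()
        if not is_reserved(candidate):
            return candidate
```

The draw parameter exists only so the rejection loop can be exercised with fixed candidates; with the default random source a reserved value is vanishingly unlikely, but the check keeps the guarantee explicit.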

Metadata records

RegisterBrokerRecord and BrokerRegistrationChangeRecord will have a new field:

{ "name": "LogDirs", "type": "[]uuid", "versions": "3+", "taggedVersions": "3+", "tag": "0",

"about": "Log directories configured in this broker which are available." }

PartitionRecord and PartitionChangeRecord will both have a new Directories field:

{ "name": "Directories", "type": "[]uuid", "versions": "1+",

"about": "The log directory hosting each replica, sorted in the same exact order as the Replicas field." }

Although not explicitly specified in the schema, the default value for Directory is Uuid.UNASSIGNED_DIR (Uuid.ZERO), as that's the default default value for UUID types.

| Footnote |

|---|

| Yes, double default, not a typo. The default setting, for the default value of the field. |


A directory assignment to Uuid.UNASSIGNED_DIR conveys that the log directory is not yet known; the hosting Broker will eventually determine the hosting log directory and use AssignReplicasToDirs to update the assignment.

RPC requests

BrokerRegistrationRequest will include the following new field:

{ "name": "LogDirs", "type": "[]uuid", "versions": "2+",

"about": "Log directories configured in this broker which are available." }

BrokerHeartbeatRequest will include the following new field:

...

{

"apiKey": <TBD>,

"type": "request",

"listeners": ["controller"],

"name": "AssignReplicasToDirsRequest",

"validVersions": "0",

"flexibleVersions": "0+",

"fields": [

{ "name": "BrokerId", "type": "int32", "versions": "0+", "entityType": "brokerId",

"about": "The ID of the requesting broker" },

{ "name": "BrokerEpoch", "type": "int64", "versions": "0+", "default": "-1",

"about": "The epoch of the requesting broker" },

{ "name": "Directories", "type": "[]DirectoryData", "versions": "0+", "fields": [

{ "name": "Id", "type": "uuid", "versions": "0+", "about": "The ID of the directory" },

{ "name": "Topics", "type": "[]TopicData", "versions": "0+", "fields": [

{ "name": "TopicId", "type": "uuid", "versions": "0+",

"about": "The ID of the assigned topic" },

{ "name": "Partitions", "type": "[]PartitionData", "versions": "0+", "fields": [

{ "name": "PartitionIndex", "type": "int32", "versions": "0+",

"about": "The partition index" }

]}

]}

]}

]

}

{

"apiKey": <TBD>,

"type": "response",

"name": "AssignReplicasToDirsResponse",

"validVersions": "0",

"flexibleVersions": "0+",

"fields": [

{ "name": "ThrottleTimeMs", "type": "int32", "versions": "0+",

"about": "The duration in milliseconds for which the request was throttled due to a quota violation, or zero if the request did not violate any quota." },

{ "name": "ErrorCode", "type": "int16", "versions": "0+",

"about": "The top level response error code" },

{ "name": "Directories", "type": "[]DirectoryData", "versions": "0+", "fields": [

{ "name": "Id", "type": "uuid", "versions": "0+", "about": "The ID of the directory" },

{ "name": "Topics", "type": "[]TopicData", "versions": "0+", "fields": [

{ "name": "TopicId", "type": "uuid", "versions": "0+",

"about": "The ID of the assigned topic" },

{ "name": "Partitions", "type": "[]PartitionData", "versions": "0+", "fields": [

{ "name": "PartitionIndex", "type": "int32", "versions": "0+",

"about": "The partition index" },

{ "name": "ErrorCode", "type": "int16", "versions": "0+",

"about": "The partition level error code" }

]}

]}

]}

]

}


An AssignReplicasToDirs request that includes an assignment to Uuid.LOST_DIR conveys that the Broker wants to correct a replica assignment into an offline log directory which cannot be identified.

This request is authorized with CLUSTER_ACTION on CLUSTER.
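To make the nesting of the request concrete, the sketch below groups a flat list of assignments into the Directories, Topics and Partitions structure of the schema above. It is only an illustration: it builds plain dictionaries rather than real protocol objects, it assumes the topic is identified by a uuid-typed field named TopicId, and the function name build_directories is hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# (directory id, topic id, partition index); ids are plain strings in this sketch.
Assignment = Tuple[str, str, int]


def build_directories(assignments: Iterable[Assignment]) -> List[dict]:
    """Group flat assignments by directory, then by topic, as the schema nests them."""
    grouped: Dict[str, Dict[str, List[int]]] = defaultdict(lambda: defaultdict(list))
    for dir_id, topic_id, partition in assignments:
        grouped[dir_id][topic_id].append(partition)
    return [
        {
            "Id": dir_id,
            "Topics": [
                {
                    "TopicId": topic_id,
                    "Partitions": [{"PartitionIndex": p} for p in sorted(parts)],
                }
                for topic_id, parts in topics.items()
            ],
        }
        for dir_id, topics in grouped.items()
    ]
```

Grouping by directory first keeps each directory UUID on the wire only once per request, however many partitions are assigned to it.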

Proposed changes

Metrics

| MBean name | Description |

|---|---|

| kafka.server:type=KafkaServer,name=QueuedReplicaToDirAssignments | The number of replicas hosted by the broker that are either missing a log directory assignment in the cluster metadata or are currently found in a different log directory, and are queued to be sent to the controller in an AssignReplicasToDirs request. |

Configuration

The following configuration option is introduced

| Name | Description | Default | Valid Values | Priority |

|---|---|---|---|---|

| log.dir.failure.timeout.ms | If the broker is unable to successfully communicate to the controller that some log directory has failed for longer than this time, and there's at least one partition with leadership on that directory, the broker will fail and shut down. | 30000 (30 seconds) | [1, …] | low |

Storage format command

The format subcommand will be updated to ensure each log directory has an assigned UUID and it will persist a new property directory.id in the meta.properties file when multiple log.dirs are configured. The value is base64 encoded, like the cluster UUID.

...

| Footnote |

|---|

If an existing, non JBOD KRaft cluster is upgraded to the first version that includes the changes described in this KIP, which write these new fields, and is later downgraded, the |

...

When the broker starts up and initializes LogManager, it will load the UUID for each log directory (directory.id) by reading the meta.properties file at the root of each of them.

- If there are any two log directories with the same UUID, the Broker will fail at startup.

- If there are any meta.properties files missing directory.id, a new UUID is generated and assigned to that log directory by updating the meta.properties file.

The set of all loaded log directory UUIDs is sent along in the broker registration request to the controller as the LogDirs field.
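The startup checks described above could be sketched roughly as follows. This is not the LogManager implementation: it works on already-parsed meta.properties contents held in memory, the names new_directory_id and load_directory_ids are hypothetical, and the unpadded URL-safe base64 rendering of the UUID is an assumption about how the value is encoded.

```python
import base64
import uuid
from typing import Callable, Dict


def new_directory_id() -> str:
    """Random UUID rendered as unpadded URL-safe base64 (an assumed encoding)."""
    return base64.urlsafe_b64encode(uuid.uuid4().bytes).rstrip(b"=").decode("ascii")


def load_directory_ids(
    meta_by_dir: Dict[str, Dict[str, str]],
    generate: Callable[[], str] = new_directory_id,
) -> Dict[str, str]:
    """Return {log directory path: directory.id}, filling in missing ids.

    meta_by_dir maps each log directory path to the parsed contents of its
    meta.properties file. Missing ids are written back into that mapping,
    standing in for the update of the file on disk.
    """
    ids: Dict[str, str] = {}
    for path, props in meta_by_dir.items():
        if "directory.id" not in props:
            props["directory.id"] = generate()
        ids[path] = props["directory.id"]

    # Two directories sharing an id is a fatal configuration error.
    seen: Dict[str, str] = {}
    for path, dir_id in ids.items():
        if dir_id in seen:
            raise RuntimeError(f"duplicate directory.id in {seen[dir_id]} and {path}")
        seen[dir_id] = path
    return ids
```

The values of the returned mapping are what would be sent as the list of log directory UUIDs in the broker registration request.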

Metadata caching

Currently, replicas are considered offline if the hosting broker is offline. Additionally, replicas will also be considered offline if the replica references a log directory UUID (in the new field partitionRecord.Directories) that is not present in the hosting Broker's latest registration under LogDirs and either:

- the log directory UUID is Uuid.LOST_DIR

- the hosting broker's registration indicates multiple online log directories, i.e. brokerRegistration.LogDirs.length > 1

If neither of the above conditions is true, we assume that the broker is configured with a single log directory, that all replicas live in that directory, and that neither log directory assignments nor log directory failures shall be communicated to the Controller.
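The offline rule for the metadata cache can be written as a small predicate. The sketch below is illustrative only: directory UUIDs are plain strings, LOST_DIR is a placeholder for Uuid.LOST_DIR, and is_replica_offline is a hypothetical name.

```python
from typing import Collection

LOST_DIR = "LOST_DIR"  # placeholder for Uuid.LOST_DIR in this sketch


def is_replica_offline(
    broker_online: bool,
    registered_log_dirs: Collection[str],
    replica_dir: str,
) -> bool:
    """Apply the offline rule for one replica on one broker."""
    if not broker_online:
        return True
    if replica_dir in registered_log_dirs:
        return False
    # The directory is not registered: the replica is offline only if it was
    # explicitly marked lost, or if the broker runs with several directories.
    return replica_dir == LOST_DIR or len(registered_log_dirs) > 1
```

With a single registered directory, an unassigned or unknown directory UUID does not make the replica offline, which matches the single-directory assumption stated above.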

Handling log directory failures

When multiple log directories are configured, and some (but not all) of them become offline, the broker will communicate this change using the new field OfflineLogDirs in the BrokerHeartbeat request, indicating the UUIDs of the new offline log directories. The UUIDs for the accumulated failed log directories are included in every BrokerHeartbeat request until the broker restarts. If the Broker is configured with a single log directory, this field isn't used, as the current behavior of the broker is to shut down when no log directories are online.

Log directory failure notifications are queued and batched together in all future broker heartbeat requests.

If the Broker repeatedly fails to communicate a log directory failure, or a replica assignment into a failed directory, for longer than a configurable amount of time (log.dir.failure.timeout.ms), and it is the leader for any replicas in the failed log directory, the broker will shut down, as that is the only other way to guarantee that the controller will elect a new leader for those partitions.
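The shutdown condition tied to log.dir.failure.timeout.ms can be sketched as a simple check. The function and parameter names are hypothetical, timestamps are in milliseconds, and how the broker actually tracks acknowledgement from the controller is not specified here.

```python
DEFAULT_TIMEOUT_MS = 30_000  # default of log.dir.failure.timeout.ms


def should_shut_down(
    failure_detected_ms: int,
    now_ms: int,
    acknowledged: bool,
    leads_replicas_in_failed_dir: bool,
    timeout_ms: int = DEFAULT_TIMEOUT_MS,
) -> bool:
    """True once an unacknowledged failure has outlived the timeout.

    Shutdown only applies when the broker leads at least one replica in the
    failed directory, since that is the case where a new leader is needed.
    """
    if acknowledged or not leads_replicas_in_failed_dir:
        return False
    return now_ms - failure_detected_ms > timeout_ms
```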

Replica management

When configured with multiple log.dirs, as the broker catches up with metadata and sees the partitions which it should be hosting, it will check the associated log directory UUID for each partition (partitionRecord.Directories).

- If the partition is not assigned to a log directory (refers to Uuid.UNASSIGNED_DIR)

  - If the partition already exists, the broker uses the new RPC, AssignReplicasToDirs, to notify the controller to change the metadata assignment to the actual log directory.

  - If the partition does not exist, the broker selects a log directory and uses the new RPC, AssignReplicasToDirs, to notify the controller to create the metadata assignment to the actual log directory.

- If the partition is assigned to an online log directory

  - If the partition does not exist it is created in the indicated log directory.

  - If the partition already exists in the indicated log directory and no future replica exists, then no action is taken.

  - If the partition already exists in the indicated log directory, and there is a future replica in another log directory, then the broker starts the process to replicate the current replica to the future replica.

  - If the partition already exists in another online log directory and is a future replica in the log directory indicated by the metadata, the broker will replace the current replica with the future replica after making sure that the future replica is fully caught up with the current replica.

  - If the partition already exists in another online log directory, the broker uses the new RPC, AssignReplicasToDirs, to notify the controller to change the metadata assignment to the actual log directory. The partition might have been moved to a different log directory whilst the broker was offline.

- If the partition is assigned to an unknown log directory or refers to Uuid.LOST_DIR

  - If there are offline log directories, no action is taken: the assignment refers to a log directory which may be offline, and we don't want to fill the remaining online log directories with replicas that existed in the offline ones.

  - If there are no offline directories, the broker selects a log directory and uses the new RPC, AssignReplicasToDirs, to notify the controller to create the metadata assignment to the actual log directory.

If instead, a single entry is configured under log.dirs or log.dir, then the AssignReplicasToDirs RPC is only sent to correct assignments to UUID.LOST_DIR, as described above.

If the broker is configured with multiple log directories it remains FENCED until it can verify that all partitions are assigned to the correct log directories in the cluster metadata. This excludes the log directory that hosts the cluster metadata topic, if it is configured separately to a different path — using metadata.log.dir.

Assignments to be sent via AssignReplicasToDirs are queued and batched together, handled by a log directory event manager that also handles log directory failure notifications.
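The per-partition cases in this section can be read as a decision function. The sketch below is one possible reading, not the broker's implementation: directory UUIDs are plain strings, UNASSIGNED_DIR and LOST_DIR are placeholders for the reserved UUIDs, and the Action names and the reconcile function are hypothetical. Cases the text does not cover, such as an existing local replica whose assignment points at an unknown directory, simply fall through to the last rule.

```python
from enum import Enum, auto
from typing import Collection, Optional

UNASSIGNED_DIR = "UNASSIGNED_DIR"  # placeholder for Uuid.UNASSIGNED_DIR
LOST_DIR = "LOST_DIR"              # placeholder for Uuid.LOST_DIR


class Action(Enum):
    NONE = auto()
    CREATE_IN_ASSIGNED_DIR = auto()
    SELECT_DIR_AND_ASSIGN = auto()   # pick a directory, then AssignReplicasToDirs
    ASSIGN_ACTUAL_DIR = auto()       # AssignReplicasToDirs with the real location
    COPY_TO_FUTURE_REPLICA = auto()
    PROMOTE_FUTURE_REPLICA = auto()


def reconcile(
    assigned_dir: str,
    online_dirs: Collection[str],
    has_offline_dirs: bool,
    current_dir: Optional[str],
    future_dir: Optional[str] = None,
) -> Action:
    """Decide what the broker does for one partition.

    current_dir is the online directory where the replica was found locally
    (None if it does not exist); future_dir is where a future replica lives.
    """
    if assigned_dir == UNASSIGNED_DIR:
        return Action.ASSIGN_ACTUAL_DIR if current_dir else Action.SELECT_DIR_AND_ASSIGN
    if assigned_dir != LOST_DIR and assigned_dir in online_dirs:
        if current_dir is None:
            return Action.CREATE_IN_ASSIGNED_DIR
        if current_dir == assigned_dir:
            return Action.COPY_TO_FUTURE_REPLICA if future_dir else Action.NONE
        if future_dir == assigned_dir:
            return Action.PROMOTE_FUTURE_REPLICA
        return Action.ASSIGN_ACTUAL_DIR
    # Unknown directory or LOST_DIR: avoid refilling online directories with
    # replicas that may still exist in an offline one.
    return Action.NONE if has_offline_dirs else Action.SELECT_DIR_AND_ASSIGN
```

Actions that end in an AssignReplicasToDirs call would be queued and batched as described above rather than sent one by one.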

Intra-broker replica movement

...

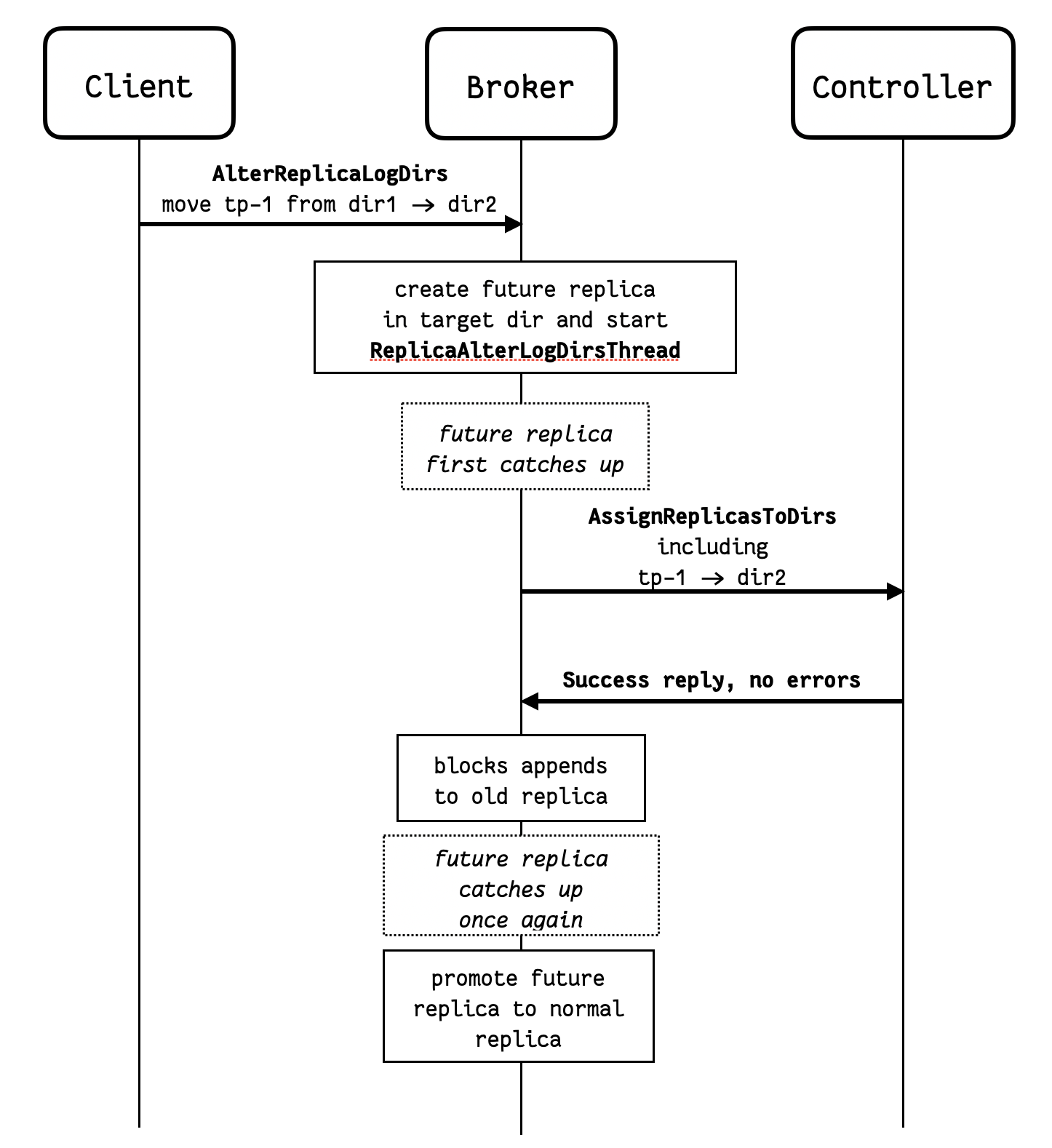

The existing AlterReplicaLogDirs RPC is sent directly to the broker in question, which starts moving the replicas using ReplicaAlterLogDirsThread; this remains unchanged. But when the future replica first catches up with the main replica, instead of immediately promoting the future replica, the broker will:

- Asynchronously communicate the log directory change to the controller using the new RPC, AssignReplicasToDirs.

- Keep the ReplicaAlterLogDirsThread going. The future replica is still the future replica, and it continues to copy from the main replica, which is still in the original log directory, as new records are appended.

Once the broker receives confirmation of the metadata change, indicated by a successful response to AssignReplicasToDirs, it will:

- Block appends to the main (old) replica and wait for the future replica to fully catch up once again.

- Make the switch, promoting the future replica to main replica and cleaning up the old one.

...

The diagram below illustrates the sequence of steps involved in moving a replica between log directories.

In the diagram above, notice that if dir1 fails after the AssignReplicasToDirs RPC is sent, but before the future replica is promoted, then the controller will not know to update leadership and ISR for the partition. If the destination directory has failed, it won't be possible to promote the future replica, and the Broker needs to revert the assignment (cancelled locally if still queued). If the source directory has failed, then the future replica might not catch up, and the Controller might not update leadership and ISR for the partition. In this exceptional case, the broker issues an AssignReplicasToDirs RPC to the Controller to assign the replica to Uuid.LOST_DIR. This lets the Controller know that it needs to update leadership and ISR for this partition too.

Controller

Replica placement

For any new partitions, the active controller will use Uuid.UNASSIGNED_DIR as the initial value for the log directory UUID for each replica; this is the default (empty) value for the tagged field. Each broker with multiple log.dirs hosting replicas then assigns a log directory UUID and communicates it back to the active controller using the new RPC AssignReplicasToDirs, so that cluster metadata can be updated with the log directory assignment. Brokers that are configured with a single log directory do not send this RPC.

Handling log directory failures

When a controller receives a BrokerHeartbeat request from a broker that indicates any UUIDs under the new OfflineLogDirs field, it will:

- Persist a BrokerRegistrationChange record with the new list of online log directories.

- Update the Leader and ISR for all the replicas assigned to the failed log directories, persisting PartitionChangeRecords, in a similar way to how leadership and ISR are updated when a broker becomes fenced, unregistered or shuts down.

...

The controller accepts the AssignReplicasToDirs RPC and persists the assignment into metadata records.

If the indicated log directory UUID is not one of the Broker's online log directories, then the replica is considered offline and the leader and ISR are updated accordingly, same as when the BrokerHeartbeat indicates a new offline log directory.

Broker registration

Upon a broker registration request the controller will persist the broker registration as cluster metadata, including the online log directory list for that broker. The controller may receive a new list of online directories, different from what was previously persisted in the cluster metadata for the requesting broker.

- If there are no indicated online log directory UUIDs the request is invalid and the controller replies with an error, INVALID_REQUEST.

- If multiple log directories are registered the broker will remain fenced until the controller learns of all the partition to log directory placements in that broker, i.e. no remaining replicas assigned to Uuid.UNASSIGNED_DIR. The broker will indicate these using the AssignReplicasToDirs RPC.

  - The broker remains fenced by not wanting to unfence itself in heartbeat requests until the number of mismatching replica to log directory assignments is zero. This number is represented by the new metric QueuedReplicaToDirAssignments.

- If multiple log directories are registered and some of them are new (not present in previous registration) then these log directories are assumed to be empty. If they are not, the broker will use the AssignReplicasToDirs RPC to correct the assignment and choose not to become UNFENCED before the metadata is correct.

...

- As per KIP-866, a separate Controller quorum is setup first, and only then the existing brokers are reconfigured and upgraded.

- When configured for the migration and while still in ZK mode, brokers will:

  - update meta.properties to generate and include directory.id;

  - send BrokerRegistrationRequest including the log directory UUIDs;

  - notify the controller of log directory failures via BrokerHeartbeatRequest.

- During the migration, the controller:

  - persists log directories indicated in broker registration requests in the cluster metadata;

  - relies on heartbeat requests to detect log directory failure instead of monitoring the ZK znode for notifications;

  - still uses full LeaderAndIsr requests to process log directory failures for any brokers still running in ZK mode.

- The brokers restarting into KRaft mode will want to stay fenced until their log directory assignments for all hosted partitions are persisted in the cluster metadata.

- The active controller will also ensure that any given broker stays fenced until it learns of all partition to log directory assignments in that specific broker via the new AssignReplicasToDirs RPC.

- During the migration, existing replicas are assumed and assigned to log directory Uuid.MIGRATING_DIR until the actual log directory is learnt by the active controller from a broker running in KRaft mode.

...

- Assumed to live in a broker that isn’t yet configured with multiple log directories, and so live in a single log directory, even if the UUID for that directory isn’t yet known by the controller. It is not possible to trigger a log directory failure from a broker that has a single log directory, as the broker would simply shut down if there are no remaining online log directories. Or

- Assigned to a log directory as of yet unknown, in a broker that remains FENCED. As the broker remains FENCED it cannot assume leadership for any partition, and so a log directory failure would be handled by the current partition leader.

...

- Keeping the scope of the log directory to the broker — while this would mean a much simpler change, as was proposed in KIP-589, if only the broker itself knows which partitions were assigned to a log directory, when a log directory fails the broker will need to send a potentially very large request enumerating all the partitions in the failed disk, so that the controller can update leadership and ISRs accordingly.

- Having the controller determine the log directory for new replicas: this would avoid a further RPC from the broker upon selecting a new log directory for new replicas, and reduce the time until it is safe for the broker to take leadership of the replica. However, the broker is in a better position to make a choice of log directory than the controller, as it has easier access to e.g. disk usage in each log directory. The controller could also have this information if the broker were to include it in the broker registration. But to keep this change manageable and timely, this optimization is best left for future work. It would also be trickier to manage the ZK→KRaft migration if we had some brokers forwarding the RPC to the controllers and others handling it directly.

- Changing how log directory failure is handled in ZooKeeper mode: ZooKeeper mode is going away; KIP-833 proposed its deprecation in a near future release.

- Using the system path to identify each log directory, or storing the identifier somewhere else — When running Kafka with multiple log directories, each log directory is typically assigned to a different system disk or volume. The same storage device can be made accessible under a different mount, and Kafka should be able to identify the contents as the same disk. Because the log directory configuration can change on the broker, the only reliable way to identify the log directory in each broker is to add metadata to the file system under the log directory itself.

- Identifying offline log directories: because we cannot identify them by mount paths, we cannot tell whether an inaccessible log directory is simply unavailable or has been removed from the configuration (think of a scenario where one log dir is offline and one was removed from log.dirs). In ZK mode we don't care to do this, and we shouldn't do it in KRaft either. What we need to know is if there are any offline log directories, to prevent re-streaming the offline replicas into the remaining online log dirs. In ZK mode, the 'isNew' flag is used to prevent the Broker from creating partitions when any log dir is offline unless they're new. A simple boolean flag to indicate some log dir is offline is enough to maintain the functionality.

...