Introduction

Kafka implements several request types that cannot immediately be answered with a response. Examples:

- A produce request with acks=all cannot be considered complete until all replicas have acknowledged the write and we can guarantee it will not be lost if the leader fails.

- A fetch request with min.bytes=1 won't be answered until there is at least one new byte of data for the consumer to consume. This allows a "long poll" so that the consumer need not busy wait checking for new data to arrive.

These requests are considered complete when either (a) the criteria they requested are met or (b) some timeout occurs.

We intend to expand the use of this delayed request facility for various additional purposes including partition assignment and potentially quota enforcement.

The number of these asynchronous operations in flight at any time scales with the number of connections, which for Kafka is often tens of thousands.

A naive implementation would simply block a thread until the criteria are met, but this would not scale to the large number of in-flight requests Kafka has.

The current approach uses a data structure called the "request purgatory". The purgatory holds any request that hasn't yet met its criteria to succeed but also hasn't yet resulted in an error. This structure holds onto these uncompleted requests and allows non-blocking, event-based generation of the responses. This approach is clearly better than having a thread per in-flight request, but our implementation of the data structure has a number of deficiencies. The goal of this proposal is to improve the efficiency of this data structure.

Current Design

The request purgatory consists of a timeout timer and a hash map of watcher lists for event-driven processing. A request is put into a purgatory when it is not immediately satisfiable because of unmet conditions. A request in the purgatory is completed later when the conditions are met, or is forced to complete (timeout) when it has been waiting longer than the time specified in the timeout parameter of the request. Currently (0.8.x) it uses the Java DelayQueue to implement the timer and the Java LinkedList for a watcher list.

When a request is completed, the request is not deleted from the timer or watcher lists immediately. Instead, completed requests are deleted as they are found during condition checking. When the deletion does not keep up, the server may exhaust the JVM heap and throw an OutOfMemoryError. To alleviate the situation, the reaper thread purges completed requests from the purgatory when the number of requests in the purgatory (both pending and completed) exceeds the configured number. The purge operation scans the timer queue and all watcher lists to find completed requests and deletes them.

By setting this configuration parameter low, the server can virtually avoid the memory problem. However, the server must pay a significant performance penalty if it scans all lists too frequently.

New Design

The goal of the new design is to allow immediate deletion of a completed request and eliminate the expensive purge process. This requires cross-referencing between entries in the timer and the requests. It is also strongly desirable to have O(1) insert/delete cost, since an insert or delete happens for every request and every completion.

To satisfy these requirements, we propose a new purgatory implementation based on Hierarchical Timing Wheels and a new watcher list implementation based on WeakReference.
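The cross-referencing and O(1) insert/delete requirements can be illustrated with a doubly linked bucket. The following is a minimal sketch, not the proposed implementation: the class names `TimerBucket`, `TimerEntry`, and `DelayedRequest` are hypothetical, and thread safety is left out for brevity.

```java
// Hypothetical names throughout; a sketch under stated assumptions, single-threaded.
final class DelayedRequest {
    TimerEntry timerEntry;   // request -> timer entry (one half of the cross-reference)
    boolean completed = false;

    // Completing a request removes its timer entry at once; no later purge is needed.
    void complete() {
        completed = true;
        if (timerEntry != null) timerEntry.remove();
    }
}

final class TimerEntry {
    final DelayedRequest request;  // timer entry -> request (the other half)
    TimerEntry prev, next;
    TimerBucket bucket;            // bucket currently holding this entry, or null

    TimerEntry(DelayedRequest request) {
        this.request = request;
        if (request != null) request.timerEntry = this;
    }

    // Safe to call more than once: a detached entry has no bucket.
    void remove() {
        if (bucket != null) bucket.unlink(this);
    }
}

final class TimerBucket {
    // Sentinel node: an empty bucket is a ring of one, so add/unlink need no null checks.
    private final TimerEntry root = new TimerEntry(null);
    private int size = 0;

    TimerBucket() {
        root.next = root;
        root.prev = root;
    }

    // O(1): link the entry just before the sentinel, that is, at the tail.
    void add(TimerEntry e) {
        e.remove();  // an entry lives in at most one bucket
        TimerEntry tail = root.prev;
        e.prev = tail;
        e.next = root;
        tail.next = e;
        root.prev = e;
        e.bucket = this;
        size++;
    }

    // O(1): unlink through the entry's own neighbour pointers; no list scan.
    void unlink(TimerEntry e) {
        e.prev.next = e.next;
        e.next.prev = e.prev;
        e.prev = null;
        e.next = null;
        e.bucket = null;
        size--;
    }

    int size() { return size; }
}
```

Because each request can reach its own list node, `complete()` never has to search the bucket, which is what makes the separate purge pass unnecessary for the timer.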

Hierarchical Timing Wheel

Doubly Linked List for Buckets in Timing Wheels

Driving Clock using DelayQueue

A simple implementation may use a thread that wakes up every unit of time and does the ticking, which checks whether there is any task in the bucket. This can be wasteful if requests are sparse. We want the thread to wake up only when there is a non-empty bucket to expire. We will do so by using java.util.concurrent.DelayQueue, similarly to the current implementation, but we will enqueue task buckets instead of tasks. This design has a performance advantage. The number of items in the DelayQueue is capped by the number of buckets, which is usually much smaller than the number of tasks, so the number of offer/poll operations on the priority queue inside the DelayQueue will be significantly smaller.
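As an illustration of enqueuing buckets rather than tasks, here is a minimal sketch. `ExpiringBucket` and `BucketClock` are hypothetical names, the bucket holds plain `Runnable` tasks as a stand-in for requests, and re-inserting tasks into lower wheels is omitted.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.DelayQueue;
import java.util.concurrent.Delayed;
import java.util.concurrent.TimeUnit;

// Hypothetical sketch: one Delayed element per bucket, not one per task.
final class ExpiringBucket implements Delayed {
    final long expirationMs;                        // absolute wall-clock time
    final List<Runnable> tasks = new ArrayList<>();

    ExpiringBucket(long expirationMs) { this.expirationMs = expirationMs; }

    @Override
    public long getDelay(TimeUnit unit) {
        return unit.convert(expirationMs - System.currentTimeMillis(), TimeUnit.MILLISECONDS);
    }

    @Override
    public int compareTo(Delayed other) {
        return Long.compare(expirationMs, ((ExpiringBucket) other).expirationMs);
    }
}

final class BucketClock {
    private final DelayQueue<ExpiringBucket> queue = new DelayQueue<>();

    // One offer per bucket, however many tasks the bucket holds.
    void schedule(ExpiringBucket bucket) { queue.offer(bucket); }

    // Sleeps for at most timeoutMs and wakes early only when a bucket expires.
    // Returns the number of tasks that were run.
    int advance(long timeoutMs) {
        int ran = 0;
        try {
            ExpiringBucket bucket = queue.poll(timeoutMs, TimeUnit.MILLISECONDS);
            while (bucket != null) {
                for (Runnable task : bucket.tasks) {
                    task.run();
                    ran++;
                }
                bucket = queue.poll();  // drain any other buckets that are already due
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();  // preserve the interrupt for the caller
        }
        return ran;
    }

    int queuedBuckets() { return queue.size(); }
}
```

In this sketch a bucket holding a thousand tasks costs a single `offer` and a single `poll` on the priority queue, which is the saving the paragraph above describes.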

WeakReference based Watcher List

Removing completed requests from watcher lists is a major pain. The problem is that completed requests are not garbage-collected if we don't remove them from the list. We therefore monitor the number of outstanding watchers and trigger a purge operation when it grows too large. Unfortunately, tuning this threshold is not trivial for a large system.

We propose to use a list implementation based on WeakReference, in which each list entry points to its element through a weak reference. Because the references are weak, a request they point to can be reclaimed by the GC once there are no strong references to it in the system. While a request is waiting for completion, there is a strong reference to it from the timer. There shouldn't be any other strong reference. Once the request is completed, the strong reference is cleared and the request will eventually be reclaimed. The cleared reference will then be seen as a null element.

Although completed requests are garbage-collected automatically, their list entries remain in the watcher lists. How do we know when to purge the lists? One idea is to use a ReferenceQueue. The JVM puts a reference object into its reference queue when the reference is cleared. By polling the reference queue, we learn how many cleared weak references are potentially in the lists. We can do the polling and counting in ExpiredOperationReaper and tryCompleteWatched.

ExpiredOperationReaper pulls weak references from the reference queue and increments the counter until the queue is empty. If the final count is greater than some threshold, ExpiredOperationReaper resets the counter to zero and traverses the list to remove all null entries. tryCompleteWatched, on the other hand, drains the reference queue at the beginning and sets the counter to zero. Then, just like the current implementation, tryCompleteWatched traverses the list and removes null entries and entries of completed requests. After a traversal, the list is clean except for any newly cleared entries, which will be detected through the reference queue.
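The two paths described above can be sketched as follows. This is an illustrative sketch only: `WeakWatcherList`, `purgeIfNeeded`, and the `purgeThreshold` parameter are hypothetical names, the list uses coarse `synchronized` methods for simplicity, and the timer is assumed to hold the only strong reference to each pending request.

```java
import java.lang.ref.ReferenceQueue;
import java.lang.ref.WeakReference;
import java.util.Iterator;
import java.util.LinkedList;
import java.util.List;
import java.util.function.Predicate;

// Hypothetical sketch of a watcher list whose entries hold requests weakly.
final class WeakWatcherList<T> {
    private final List<WeakReference<T>> entries = new LinkedList<>();
    private final ReferenceQueue<T> cleared = new ReferenceQueue<>();
    private final int purgeThreshold;
    private int clearedCount = 0;

    WeakWatcherList(int purgeThreshold) { this.purgeThreshold = purgeThreshold; }

    // Registers the request with the reference queue so cleared entries can be counted.
    synchronized WeakReference<T> watch(T request) {
        WeakReference<T> ref = new WeakReference<>(request, cleared);
        entries.add(ref);
        return ref;
    }

    // Counts references that have been cleared and enqueued since the last drain.
    private void drainQueue() {
        while (cleared.poll() != null) clearedCount++;
    }

    // Reaper path: traverse the list only if enough cleared entries have accumulated.
    synchronized boolean purgeIfNeeded() {
        drainQueue();
        if (clearedCount <= purgeThreshold) return false;
        clearedCount = 0;
        entries.removeIf(ref -> ref.get() == null);
        return true;
    }

    // Completion path: drain and reset first, then traverse the list,
    // dropping null entries and entries whose request completes.
    synchronized int tryCompleteWatched(Predicate<T> tryComplete) {
        drainQueue();
        clearedCount = 0;
        int completed = 0;
        Iterator<WeakReference<T>> it = entries.iterator();
        while (it.hasNext()) {
            T request = it.next().get();
            if (request == null) {
                it.remove();
            } else if (tryComplete.test(request)) {
                it.remove();
                completed++;
            }
        }
        return completed;
    }

    synchronized int size() { return entries.size(); }
}
```

The counter is only an upper bound on the number of dead entries still in the list, since `tryCompleteWatched` may already have removed some of them; that is why the text speaks of cleared references that are "potentially" in the lists.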

Parameters

- the tick size (the minimum time unit)

- the wheel size (the number of buckets per wheel)
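To make the two parameters concrete, the sketch below shows the usual hierarchical timing wheel arithmetic, in which each higher wheel uses the whole span of the wheel below it as its tick. The helper class `WheelMath` and its method names are hypothetical, and the current time is taken as zero to keep the arithmetic simple.

```java
// Hypothetical helper illustrating common hierarchical-timing-wheel arithmetic.
final class WheelMath {
    // Span covered by wheel `level` (1 = finest): baseTickMs * wheelSize^level.
    static long intervalMs(long baseTickMs, int wheelSize, int level) {
        long interval = baseTickMs;
        for (int i = 0; i < level; i++) interval *= wheelSize;
        return interval;
    }

    // The tick of wheel `level` equals the whole span of the wheel below it.
    static long tickMs(long baseTickMs, int wheelSize, int level) {
        return intervalMs(baseTickMs, wheelSize, level - 1);
    }

    // Lowest wheel whose span can hold a delay of delayMs.
    static int levelFor(long baseTickMs, int wheelSize, long delayMs) {
        int level = 1;
        while (delayMs >= intervalMs(baseTickMs, wheelSize, level)) level++;
        return level;
    }

    // Bucket index inside a wheel for an absolute expiration time.
    static int bucketIndex(long expirationMs, long levelTickMs, int wheelSize) {
        return (int) ((expirationMs / levelTickMs) % wheelSize);
    }
}
```

With a tick size of 1ms and a wheel size of 20, the first wheel covers 20ms and the second covers 400ms, so under these assumptions a 200ms timeout lands in the second wheel, whose tick is 20ms.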

Benchmark

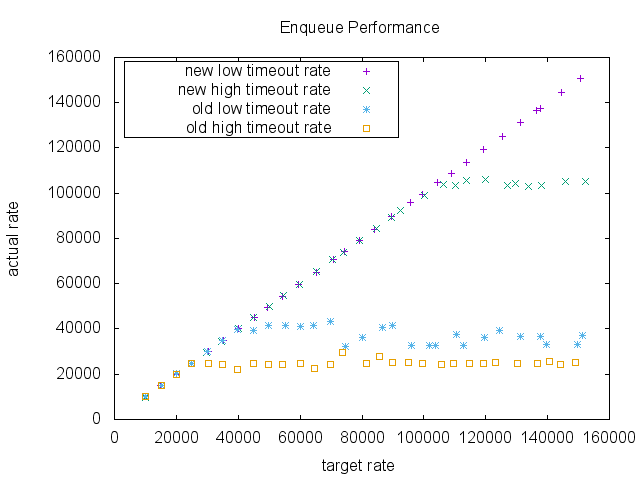

We compared the enqueue performance of two purgatory implementations: the current implementation and the proposed new implementation. This is a micro benchmark that measures purgatory enqueue performance. The purgatory was separated from the rest of the system and used a fake request that does nothing useful, so the throughput of the purgatory in a real system may be much lower than the numbers shown by the test.

In the test, the intervals between requests are assumed to follow an exponential distribution. Each request takes a time drawn from a log-normal distribution. By adjusting the shape of the log-normal distribution, we can test different timeout rates.

The tick size was 1ms and the wheel size was 20. The timeout was set to 200ms. The data size of a request was 100 bytes. For the low-timeout case, we chose a 75th percentile of 60ms and a 50th percentile of 20ms. For the high-timeout case, we chose a 75th percentile of 400ms and a 50th percentile of 200ms.
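The benchmark code itself is not shown here, so the following is only a sketch of one way such a workload could be generated. It assumes the log-normal parameters are derived from the stated median and 75th percentile, and that the median of 20 in the low-timeout case is in milliseconds. The class `Workload` and its methods are hypothetical.

```java
import java.util.Random;

// Hypothetical workload generator; not the benchmark's actual code.
final class Workload {
    // z-score of the 75th percentile of the standard normal distribution.
    static final double Z75 = 0.6744897501960817;

    // Log-normal parameters from the percentiles:
    // median = exp(mu) and p75 = exp(mu + Z75 * sigma).
    static double mu(double medianMs) { return Math.log(medianMs); }

    static double sigma(double medianMs, double p75Ms) {
        return (Math.log(p75Ms) - Math.log(medianMs)) / Z75;
    }

    // Time a request takes to complete, drawn from the log-normal distribution.
    static double completionMs(Random rnd, double mu, double sigma) {
        return Math.exp(mu + sigma * rnd.nextGaussian());
    }

    // Exponentially distributed gap between consecutive requests.
    static double interArrivalMs(Random rnd, double requestsPerSecond) {
        return -Math.log(1.0 - rnd.nextDouble()) * 1000.0 / requestsPerSecond;
    }

    // Monte Carlo estimate of the fraction of requests that outlive the timeout.
    static double timeoutRate(long seed, double mu, double sigma, double timeoutMs, int samples) {
        Random rnd = new Random(seed);
        int timedOut = 0;
        for (int i = 0; i < samples; i++) {
            if (completionMs(rnd, mu, sigma) > timeoutMs) timedOut++;
        }
        return (double) timedOut / samples;
    }
}
```

Under these assumptions, a 200ms timeout gives a timeout rate of roughly 8% in the low-timeout case and about 50% in the high-timeout case, since the high-timeout median equals the timeout.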

The result shows a dramatic difference in the high enqueue rate region. As the target rate increases, both implementations keep up with the requests initially. However, the old implementation saturated at around 60,000 requests per second, whereas the proposed implementation didn't show any significant performance degradation in this benchmark.

Another thing worth noting is that the current implementation degraded when timeouts happened more often. This may be because the current implementation has to purge the delay queue and watcher lists more often. The proposed implementation showed a slight degradation only when the enqueue rate was very high.

Summary

In the new design, we use Hierarchical Timing Wheels for the timeout timer, a WeakReference-based list for watcher lists, and a DelayQueue of timer buckets to advance the clock on demand. The advantage is that a completed request is removed from the timer queue immediately and becomes GCable. When a request is completed, we immediately remove it from its timer bucket at O(1) cost. The strong reference to the request is cleared at this point. All remaining references are weak references from watcher lists, so completed requests become GCable right away. The buckets remain in the delay queue. However, the number of buckets is bounded, and in a healthy system most requests are satisfied before they time out, so many of the buckets become empty before they are pulled out of the delay queue. Also, the timer should rarely have the buckets of the lower interval. We expect an improvement in GC behavior and lower CPU usage.